Uncertainty Quantification in Deep Learning

Check out our latest papers:

- ICML 2026: GPan-LoRA: Gaussian Process Amortized Networks for Bayesian Low-Rank Adaptation in Large Language Models

- ICML 2026: SIKA-GP: Accelerating Gaussian Process Inference with Sparse Inducing Kernel Approximations for Bayesian Deep Learning

- AISTATS 2025: From deep additive kernel learning to last-layer Bayesian neural networks via induced prior approximation

Motivation: Can we keep the principled uncertainty and structure of Gaussian processes (GPs) while making them behave more like scalable Bayesian neural networks (BNNs)?

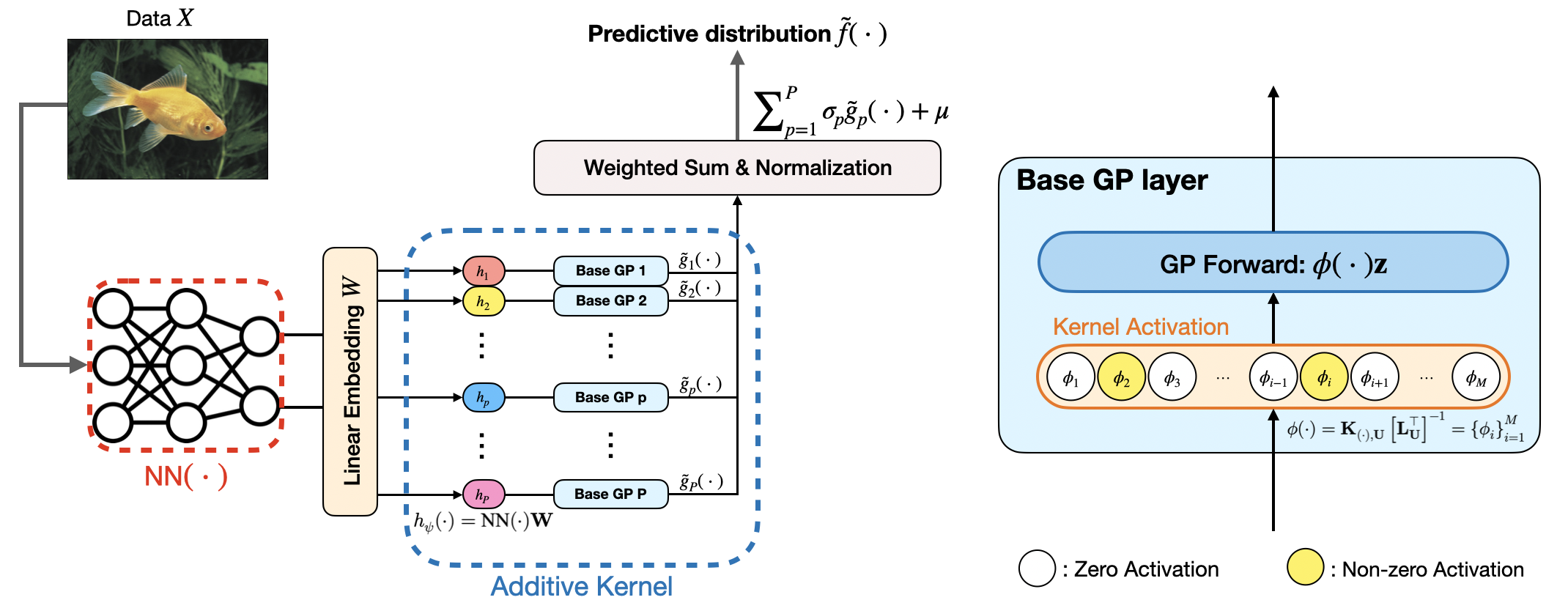

DAK: from Deep Kernel Learning to last-layer Bayesian Neural Networks

Takeaway:

- The additive structure gives a cleaner way to scale DKL to high-dimensional learned features. Instead of asking one GP to model everything jointly, DAK breaks the last layer into manageable 1D pieces.

- The induced prior approximation is built on fixed induced grids rather than learned inducing locations. That makes the model much easier to implement, and it also leads naturally to the last-layer BNN interpretation.

- DAK2BNN package is available at https://github.com/warrenzha/dak2bnn

Let the neural network produce a feature map \(h_\psi(x) \in \mathbb{R}^P\). Instead of placing one GP on the full feature vector, DAK decomposes the last layer into a sum of one-dimensional GP units:

\[f(x) = \sum_{p=1}^P \sigma_p \, g_p\!\left(h_\psi^{[p]}(x)\right) + \mu, \quad g_p \sim \mathcal{GP}(0, k_p).\]

Deep additive kernel: additive GPs + deep neural features. With independent GP units \(g_p\), the model induces the kernel

\[k_{\mathrm{DAK}}(x,x') = \sum_{p=1}^P \sigma_p^2 \, k_p\!\left(h_\psi^{[p]}(x), h_\psi^{[p]}(x')\right).\]

The key modeling choice is that each GP unit now lives in 1D. That makes it possible to use a fixed interpolation grid with a sparse Markov structure, instead of placing inducing points in the full high-dimensional feature space, where a grid would grow exponentially with the dimension.
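To make the additive kernel concrete, here is a minimal NumPy sketch that evaluates \(k_{\mathrm{DAK}}\) on a batch of feature vectors. It assumes an RBF base kernel for every \(k_p\) and treats the features `H` as given (in DAK they would come from \(h_\psi\)); the function names `rbf_1d` and `dak_kernel` and all parameter values are hypothetical, not the DAK2BNN API.

```python
import numpy as np

def rbf_1d(a, b, lengthscale):
    """1D RBF kernel matrix between vectors a (n,) and b (m,)."""
    diff = a[:, None] - b[None, :]
    return np.exp(-0.5 * (diff / lengthscale) ** 2)

def dak_kernel(H1, H2, sigmas, lengthscales):
    """Additive kernel: sum over p of sigma_p^2 * k_p(h^[p](x), h^[p](x')).

    H1 (n, P) and H2 (m, P) stand in for the learned features h_psi(x).
    """
    K = np.zeros((H1.shape[0], H2.shape[0]))
    for p in range(H1.shape[1]):
        # Each additive unit only ever sees one scalar feature coordinate.
        K += sigmas[p] ** 2 * rbf_1d(H1[:, p], H2[:, p], lengthscales[p])
    return K

rng = np.random.default_rng(0)
P = 16
H = rng.normal(size=(5, P))      # stand-in features for 5 inputs
sigmas = np.full(P, 0.5)
lengthscales = np.ones(P)
K = dak_kernel(H, H, sigmas, lengthscales)
```

Because each term is a valid 1D kernel scaled by \(\sigma_p^2\), the sum is again symmetric positive semi-definite, and its diagonal equals \(\sum_p \sigma_p^2\) for RBF units.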

From additive GPs to BNNs: The second key step is the induced prior approximation. For each 1D GP, the paper uses a fixed grid \(U\) and approximates the prior as

\[\tilde g_p(\cdot) = K(\cdot, U) K_{U,U}^{-1} g_p(U) = \phi(\cdot) z_p, \quad z_p \sim \mathcal{N}(0, I).\]

One way to read the second equality: write the grid values as \(g_p(U) = L_U z_p\) for a square-root factor \(L_U L_U^\top = K_{U,U}\) (for example, a Cholesky factor), so the kernel activation is \(\phi(\cdot) = K(\cdot, U) K_{U,U}^{-1} L_U\). Plugging this into the additive model gives

\[\tilde f(x) = \sum_{p=1}^P \sigma_p \, \phi\!\left(h_\psi^{[p]}(x)\right) z_p + \mu.\]

This is the bridge I like most in the paper: after approximation, the last GP layer becomes a Bayesian last layer with kernel activations and Gaussian weights. So DAK is not just “NN + GP”; it is a hybrid architecture that can be trained like a neural network while still preserving a clear kernel interpretation.
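The induced prior can be sketched in a few lines of NumPy. This sketch assumes a 1D exponential (Matérn-1/2) kernel, chosen because its GP is Markov: the interpolation weights \(K(x,U)K_{U,U}^{-1}\) are nonzero only on the (at most two) grid points that bracket \(x\). It writes \(g_p(U) = L_U z_p\) with \(L_U\) the Cholesky factor of \(K_{U,U}\), which is one valid choice of \(\phi\) and not necessarily the factorization DAK uses. The grid, helper names, and sizes are hypothetical.

```python
import numpy as np

def exp_kernel(a, b, lengthscale=1.0):
    """1D exponential (Matern-1/2) kernel matrix; the corresponding GP is Markov."""
    return np.exp(-np.abs(a[:, None] - b[None, :]) / lengthscale)

# Fixed 1D grid U shared by every additive unit (an assumption of this sketch).
U = np.linspace(-3.0, 3.0, 17)
K_UU = exp_kernel(U, U)
L_U = np.linalg.cholesky(K_UU)            # g_p(U) = L_U z_p has covariance K_UU

def interp_weights(x):
    """K(x, U) K_UU^{-1}, shape (len(x), len(U)); K_UU is symmetric."""
    return np.linalg.solve(K_UU, exp_kernel(U, x)).T

def phi(x):
    """Kernel activation phi(x) = K(x, U) K_UU^{-1} L_U."""
    return interp_weights(x) @ L_U

def last_layer(H, Z, sigmas, mu):
    """One draw of f~(x) = sum_p sigma_p phi(h^[p](x)) z_p + mu; H is (n, P), Z is (P, len(U))."""
    return sum(sigmas[p] * (phi(H[:, p]) @ Z[p]) for p in range(H.shape[1])) + mu

rng = np.random.default_rng(0)
n, P = 6, 4
H = rng.uniform(-2.5, 2.5, size=(n, P))   # stand-in for learned features h_psi(x)
Z = rng.standard_normal((P, U.size))      # Gaussian last-layer weights z_p ~ N(0, I)
f_draw = last_layer(H, Z, sigmas=np.ones(P), mu=0.0)
```

Note that only the interpolation weights are sparse here; multiplying by the dense Cholesky factor makes \(\phi\) itself dense. The covariance of \(\phi(x) z_p\) still recovers the Nyström-type prior \(K(x,U)K_{U,U}^{-1}K(U,x')\), which is what makes the Gaussian-weight last layer a faithful stand-in for the approximated GP.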

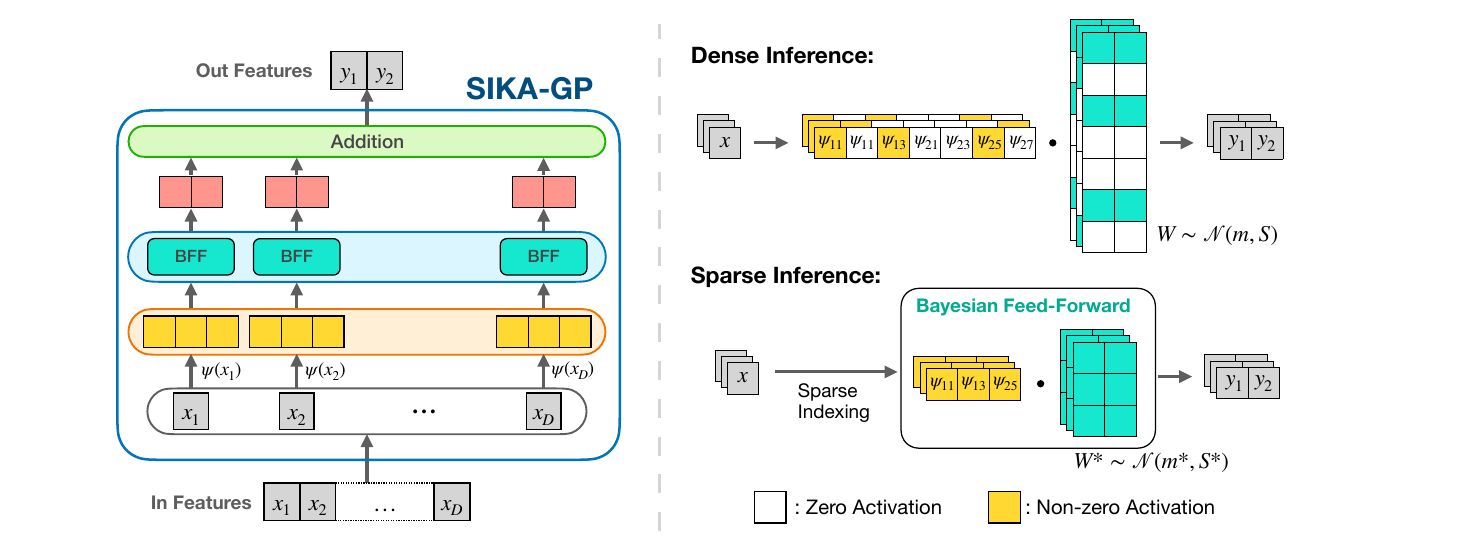

SIKA-GP: Accelerating Gaussian Process Inference with Sparse Inducing Kernel Approximations for Bayesian Deep Learning

Takeaway:

- SIKA-GP turns inducing-kernel GP inference into a sparsely activated Bayesian neural layer.

- With \(M=2^L+1\) inducing points, each input only activates \(O(\log M)\) basis functions, which makes training and inference much cheaper.

- This sparse structure is especially useful for DGP and DKL models, where GP layers sit on top of high-dimensional neural features and repeated Monte Carlo sampling can otherwise be expensive.
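The \(O(\log M)\) activation count is easiest to see with a hierarchical (multilevel) piecewise-linear basis on a dyadic grid. The sketch below is an illustration under that assumption, not the SIKA-GP construction itself: it uses the classic hierarchical hat basis on \([0,1]\) with \(M = 2^L + 1\) functions, where each input falls inside at most one hat per level. The function names `active_basis` and `dense_basis` are hypothetical.

```python
import numpy as np

def active_basis(x, L):
    """Nonzero hierarchical hat functions at x in [0, 1] for M = 2**L + 1 basis functions.

    Returns {global_index: value}; at most L + 2 entries, i.e. O(log M) work.
    """
    out = {0: 1.0 - x, 1: x}                 # level 0: two boundary linear functions
    offset = 2
    for l in range(1, L + 1):
        n_l, h = 2 ** (l - 1), 2.0 ** (-l)   # number of hats at this level, half-width
        j = min(int(x * n_l), n_l - 1)       # the only hat whose support can contain x
        c = (2 * j + 1) * h                  # its center
        v = 1.0 - abs(x - c) / h
        if v > 0:
            out[offset + j] = v
        offset += n_l
    return {k: v for k, v in out.items() if v > 0}

def dense_basis(x, L):
    """Reference version: evaluate all M = 2**L + 1 hats at x (O(M) work)."""
    vals = [1.0 - x, x]
    for l in range(1, L + 1):
        h = 2.0 ** (-l)
        for j in range(2 ** (l - 1)):
            c = (2 * j + 1) * h
            vals.append(max(0.0, 1.0 - abs(x - c) / h))
    return np.array(vals)
```

With Gaussian weights \(w \sim \mathcal{N}(0, I)\) on these basis functions, a layer output \(\sum_{k} \phi_k(x) w_k\) only needs the few active indices, which is where the savings for repeated Monte Carlo passes would come from.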

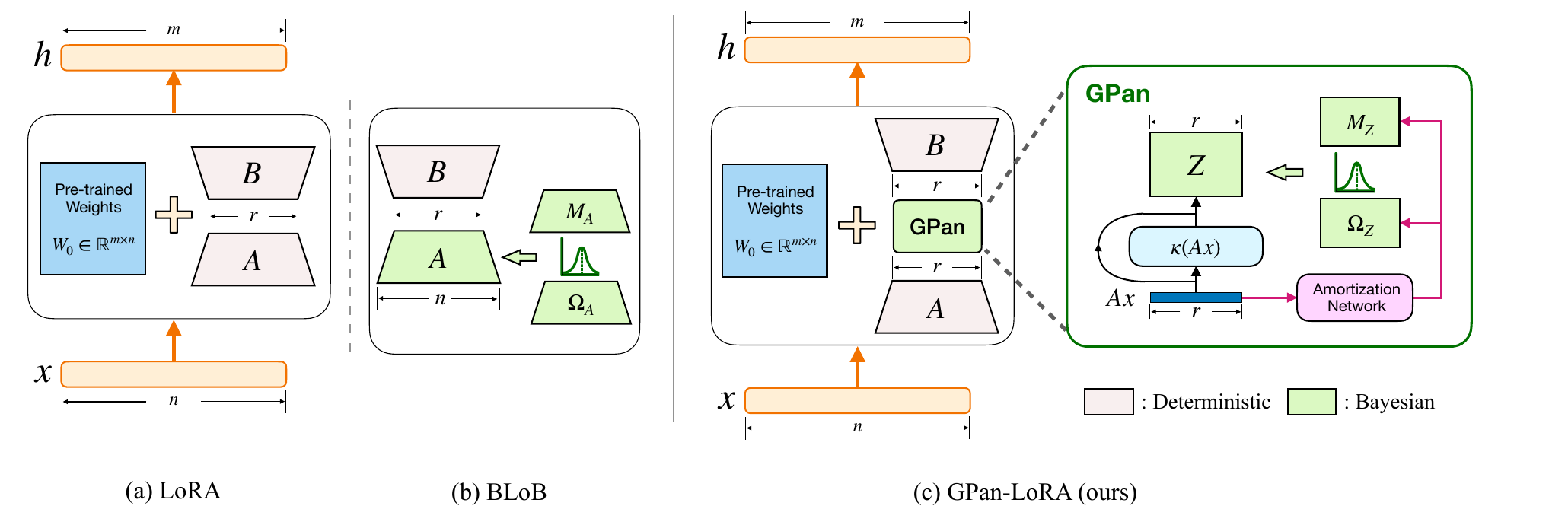

GPan-LoRA: Gaussian Process Amortized Networks for Bayesian Low-Rank Adaptation in Large Language Models

Takeaway:

- GPan-LoRA keeps the parameter efficiency of LoRA while adding a GP-style Bayesian residual in the low-rank adaptation space.

- The Bayesian variables scale with the compact latent GP module, roughly $O(\ell r^2)$, rather than with the full LoRA input dimension ($O(nr)$).

- GPan-LoRA gives better uncertainty estimates across various LLM backbones and use cases, especially under distribution shift.

LoRA adapts a frozen weight matrix $W_0 \in \mathbb{R}^{m\times n}$ by adding a trainable low-rank update,

\[h = (W_0 + \Delta W)x = W_0x + BAx,\]

where $A \in \mathbb{R}^{r\times n}$, $B \in \mathbb{R}^{m\times r}$, and $r \ll \min\{m,n\}$. This is parameter-efficient, but the standard LoRA adapter is deterministic. Bayesian LoRA methods add uncertainty by placing distributions over the adapter parameters, yet directly Bayesianizing the LoRA factors can still be costly or poorly calibrated under distribution shift.
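For reference, a plain (deterministic) LoRA forward pass looks like this in NumPy. The sizes and initialization scales are illustrative; initializing \(B\) to zero follows the original LoRA recipe, so the adapted layer starts out identical to the frozen one.

```python
import numpy as np

rng = np.random.default_rng(0)
m, n, r = 64, 128, 4                         # illustrative sizes with r << min(m, n)
W0 = rng.normal(size=(m, n)) / np.sqrt(n)    # frozen pretrained weight
A = rng.normal(size=(r, n)) / np.sqrt(n)     # trainable down-projection
B = np.zeros((m, r))                         # trainable up-projection, zero-initialized

def lora_forward(x):
    """h = W0 x + B (A x); the m x n update BA is never materialized."""
    return W0 @ x + B @ (A @ x)

x = rng.normal(size=n)
h = lora_forward(x)
full_params = m * n          # 8192 entries in W0
lora_params = r * (m + n)    # 768 trainable entries in A and B
```

Computing `B @ (A @ x)` instead of `(B @ A) @ x` keeps the per-input cost at \(O(r(m+n))\), the same structure GPan-LoRA builds on when it places the GP on \(Ax\).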

GPan-LoRA takes a function-space view. It writes the adapted hidden representation as

\[h = f_0(x) + f_\Delta(x), \qquad f_\Delta(\cdot) = g(\cdot) + \mu(\cdot),\]

where $f_0$ is the frozen pretrained model, $g(\cdot)$ is a zero-mean Gaussian process, and $\mu(\cdot)$ is a mean function. The key idea is that the GP is not placed on the full feature $x \in \mathbb{R}^n$. Instead, it is placed on the low-rank LoRA feature

\[\tau(x) = Ax \in \mathbb{R}^r.\]

So the Bayesian part lives in the compact rank-$r$ adaptation space rather than the full model dimension.

Low-rank GP adapter: The low-rank GP view is justified when the kernel can be written through projected features,

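\[k(x,x') = \tilde{k}(Ax,Ax').\]

For example, a squared exponential kernel with a low-rank lengthscale matrix (precision $A^\top A$, so that $(x-x')^\top A^\top A (x-x') = \|Ax-Ax'\|^2$) can be written as

\[k_{\mathrm{SE}}(x,x') = \sigma^2\exp\left(-\frac{1}{2}\|Ax-Ax'\|^2\right) = \pi_{\mathrm{SE}}(Ax,Ax'),\]

where $\pi_{\mathrm{SE}}$ is an ordinary SE kernel on $\mathbb{R}^r$ playing the role of $\tilde{k}$. This means the GP residual can be indexed by $Ax$ without losing the intended function-space interpretation.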
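A quick numerical check of this identity: the sketch below evaluates the SE kernel once in the full $n$-dimensional space with precision $A^\top A$ and once on the projected features $Ax$, and also shows that moving $x$ along the null space of $A$ leaves the kernel unchanged. The matrix sizes and values are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(1)
n, r = 256, 4
A = rng.normal(size=(r, n)) / np.sqrt(n)   # stand-in LoRA down-projection
sigma2 = 1.5

def k_se_full(x, xp):
    """SE kernel in R^n with rank-r precision matrix A^T A (O(n^2) per pair)."""
    d = x - xp
    return sigma2 * np.exp(-0.5 * d @ (A.T @ A) @ d)

def k_se_projected(t, tp):
    """The same kernel evaluated only on the r-dim features t = A x (O(r) per pair)."""
    return sigma2 * np.exp(-0.5 * np.sum((t - tp) ** 2))

x, xp = rng.normal(size=n), rng.normal(size=n)
full = k_se_full(x, xp)
proj = k_se_projected(A @ x, A @ xp)

# A direction v with A v = 0: the kernel cannot "see" it.
w = rng.normal(size=n)
v = w - A.T @ np.linalg.solve(A @ A.T, A @ w)
```

The kernel is constant along the $n - r$ null-space directions of $A$, which is exactly why the GP only needs to be defined on $\mathbb{R}^r$.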

To make the GP scalable, GPan-LoRA uses an inducing-point approximation. For the projected feature $Ax$, the residual is approximated by

\[g(Ax) \approx \sum_{i=1}^{r} z_i^\top \kappa(a_i^\top x, u),\]

where $a_i^\top$ is the $i$-th row of $A$ (so $a_i^\top x$ is the $i$-th LoRA feature), $u$ are inducing points, $\kappa(\cdot)$ is a deterministic kernel feature map, and $z_i$ are Gaussian latent weights. This converts the sparse GP into a Bayesian hidden layer. After vectorizing the $r$ GP heads, the residual becomes

\[f_\Delta(x) = BZ\kappa(Ax) + \mu(Ax).\]

With the paper’s residual mean and weight sharing, this can be written compactly as

\[f_\Delta(x) = BZ\bigl(\kappa(Ax) + PAx\bigr).\]

Here, $A$ and $B$ are deterministic LoRA matrices, while $Z$ is the Bayesian GP-induced component.
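The data flow of the GP-induced residual can be sketched as follows. Several details are assumptions of this sketch rather than the paper's exact choices: an RBF base kernel, fixed inducing points `u` shared by all $r$ coordinates, a whitened feature map $\kappa(t_i, u) = L_{uu}^{-1} k(u, t_i)$, and $Z$ of shape $(r, rM)$ so that each of the $r$ heads sums over all $r$ coordinates. The mean term is omitted because $P$ is not specified here; all names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(2)
m, n, r, M = 32, 64, 4, 8                  # output dim, input dim, LoRA rank, inducing points
A = rng.normal(size=(r, n)) / np.sqrt(n)   # deterministic LoRA matrices
B = rng.normal(size=(m, r)) / np.sqrt(r)
u = np.linspace(-2.0, 2.0, M)              # inducing points, fixed in this sketch

def rbf(a, b, ls=1.0):
    return np.exp(-0.5 * ((a[:, None] - b[None, :]) / ls) ** 2)

L_uu = np.linalg.cholesky(rbf(u, u) + 1e-6 * np.eye(M))   # jitter for numerical stability

def kappa(t):
    """Whitened feature map: block i is L_uu^{-1} k(u, t_i); output shape (r * M,)."""
    return np.linalg.solve(L_uu, rbf(u, t)).T.ravel()

def f_delta(x, Z):
    """GP-induced residual B Z kappa(Ax) for one draw Z of shape (r, r * M)."""
    return B @ (Z @ kappa(A @ x))

x = rng.normal(size=n)
Z = rng.standard_normal((r, r * M))        # one draw of the Gaussian latent weights
out = f_delta(x, Z)
```

With whitening, $Z \sim \mathcal{N}(0, I)$ makes each head's covariance $\kappa(Ax)^\top \kappa(Ax')$ an additive Nyström approximation over the $r$ LoRA coordinates, so the only stochastic object in the layer is $Z$.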

Amortized variational inference: Instead of learning one global posterior for all inputs, GPan-LoRA uses a small amortization network to produce input-dependent variational parameters:

\[\theta = f^{\mathrm{inf}}_\phi(Ax), \qquad q_\phi(Z\mid x),\]

where $\theta$ are the variational parameters of the posterior $q_\phi(Z\mid x)$ over the GP-induced weights. This lets the model adapt its uncertainty to each input. Intuitively, unfamiliar inputs can receive broader posterior uncertainty, which helps reduce overconfident predictions under distribution shift.
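A minimal sketch of amortization, assuming a mean-field (diagonal) Gaussian $q_\phi(Z\mid x)$ and a tiny one-hidden-layer tanh network as $f^{\mathrm{inf}}_\phi$; the network shape, the names `f_inf` and `sample_Z`, and the sizes are hypothetical. The point is only that the variational parameters are a function of $Ax$ rather than a single global set.

```python
import numpy as np

rng = np.random.default_rng(3)
r, M, hidden = 4, 8, 16
d_z = r * r * M                              # entries of Z with shape (r, r * M)

# Hypothetical amortization network: tau = A x (r,) -> (mean, log_std) of q(Z | x).
W1, b1 = rng.normal(size=(hidden, r)) * 0.1, np.zeros(hidden)
W2, b2 = rng.normal(size=(2 * d_z, hidden)) * 0.1, np.zeros(2 * d_z)

def f_inf(tau):
    """Map the LoRA feature to input-dependent variational parameters theta."""
    hid = np.tanh(W1 @ tau + b1)
    out = W2 @ hid + b2
    return out[:d_z], out[d_z:]              # mean, log standard deviation

def sample_Z(tau, rng):
    """Reparameterized draw Z = mean + exp(log_std) * eps, reshaped to (r, r * M)."""
    mean, log_std = f_inf(tau)
    eps = rng.standard_normal(d_z)
    return (mean + np.exp(log_std) * eps).reshape(r, r * M)

tau = rng.normal(size=r)                     # stand-in for A x
Z = sample_Z(tau, rng)
```

The reparameterized form keeps the sample differentiable in the network weights, so in an autodiff framework the same code path can be trained end to end with the LoRA matrices.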

The training objective is a variational free-energy loss,

\[\mathcal{L}(A,B,\phi) = -\mathbb{E}_{q_\phi}\bigl[\log P(D\mid A,B,\theta)\bigr] + \mathrm{KL}\bigl(q_\phi(\theta\mid x)\,\|\,p_\phi(\theta)\bigr).\]

At test time, predictions are obtained by drawing $Z_s \sim q_\phi(Z\mid x^\ast)$ for $s = 1,\dots,S$ and averaging:
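The two terms of the free energy can be estimated as below, assuming a diagonal Gaussian posterior and a standard normal prior $\mathcal{N}(0, I)$ over the latent weights (the text writes the prior as $p_\phi(\theta)$, which may differ in the paper). The likelihood term is a Monte Carlo average over posterior draws; the KL term uses the closed form for Gaussians. Function names are hypothetical.

```python
import numpy as np

def kl_diag_gauss_std_normal(mean, log_std):
    """KL( N(mean, diag(exp(log_std)^2)) || N(0, I) ) in closed form."""
    var = np.exp(2.0 * log_std)
    return 0.5 * np.sum(var + mean ** 2 - 1.0 - 2.0 * log_std)

def free_energy(log_lik_samples, mean, log_std):
    """MC estimate of -E_q[log p(D | ...)] plus the KL regularizer.

    log_lik_samples: log-likelihood of the batch under S posterior draws.
    """
    return -np.mean(log_lik_samples) + kl_diag_gauss_std_normal(mean, log_std)
```

The KL term pulls input-dependent posteriors back toward the prior, which is what prevents the amortization network from simply shrinking the variance to zero on every input.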

\[P(y^\ast\mid x^\ast,D) \approx \frac{1}{S}\sum_{s=1}^{S}p(y^\ast\mid x^\ast,Z_s).\]
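For a classification-style output (for example, scoring answer options with an LLM), the Monte Carlo average is taken over probabilities, not logits. The sketch below assumes a softmax likelihood and takes per-sample logits as given; the helper names are hypothetical. Disagreement between posterior samples shows up directly as higher predictive entropy.

```python
import numpy as np

def softmax(logits):
    z = logits - logits.max(axis=-1, keepdims=True)   # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def mc_predictive(sample_logits):
    """sample_logits: (S, C) logits from S posterior draws Z_s -> averaged probabilities (C,)."""
    return softmax(sample_logits).mean(axis=0)

def predictive_entropy(p):
    return -np.sum(p * np.log(np.clip(p, 1e-12, 1.0)))

agree = mc_predictive(np.array([[5.0, 0.0], [5.0, 0.0]]))     # samples agree
disagree = mc_predictive(np.array([[5.0, 0.0], [0.0, 5.0]]))  # confident but conflicting samples
```

Here `disagree` comes out as `[0.5, 0.5]` with entropy $\log 2$, even though each individual sample is confident; averaging logits first would hide that disagreement in less symmetric cases.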
