AI-driven Dynamic mmWave Network

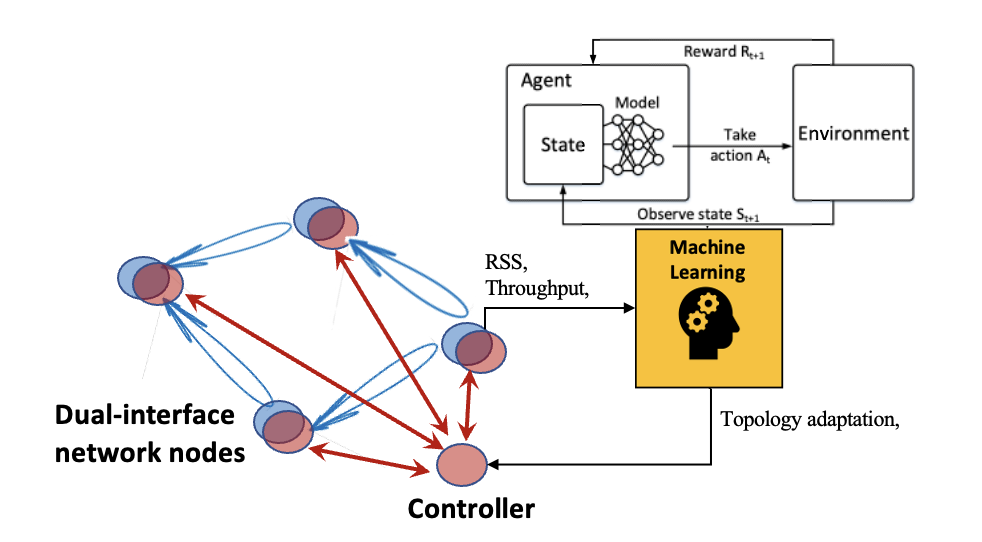

Dynamic mmWave Mesh Network

Millimeter-wave (mmWave) mesh networks can provide multi-Gbps transmission, but they suffer from large path loss and must satisfy heterogeneous objectives, which makes them hard to configure with heuristic models. Machine learning (ML) techniques, especially reinforcement learning (RL), have great potential for solving the multi-objective, non-linear, and non-convex problems that often arise in mmWave mesh network configuration. However, network configuration policies learned in simulation cannot always help physical networks meet performance requirements because of the sim-to-real (sim2real) gap. In this work, we develop an RL model to train a policy for dynamic topology management and a self-supervised policy adaptation algorithm to bridge the domain gap. The experimental results show that our RL agent can learn a policy that avoids blocked links and that the self-supervised learning model can help to eliminate the domain gap. The testbed we built can establish multiple routes and can be controlled effectively by a central controller. We successfully ran the simulation-trained RL policy and the self-supervision agent on the real testbed.

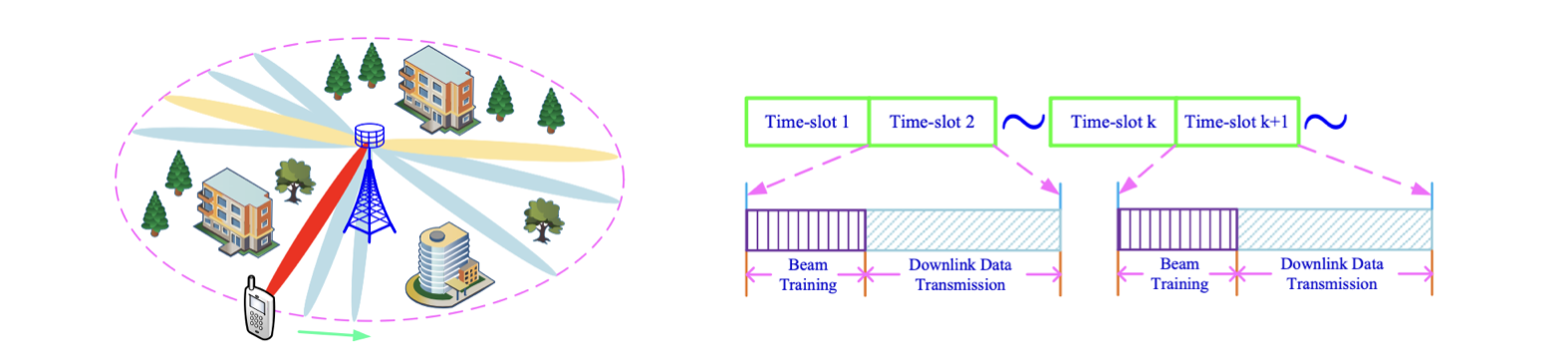

Beam Alignment and Tracking

Millimeter-wave (mmWave) communications have attracted increasing attention thanks to their abundant spectrum resources. The short wavelength of mmWave signals makes it practical to use large antenna arrays, which achieve large array gains and combat the large path loss. However, large antenna arrays with narrow beams incur a large beam-training overhead for obtaining channel state information, especially in dynamic environments. To reduce this overhead, we formulate the problem of beam alignment and tracking (BA/T) as a stochastic bandit problem. In particular, to sense changes in the environment, the actions are defined on the offset between successive beam indexes (i.e., the beam index difference), which measures how fast the environment changes. We then propose two efficient BA/T algorithms based on stochastic bandit learning. To reveal useful insights, we further analyze the effective achievable rate of the proposed BA/T algorithms. The analytical results show that the algorithms can sense changes in the environment and adjust their beam training strategies intelligently. In addition, they do not require any prior knowledge of the dynamic channel model, and are thus applicable to a variety of complicated scenarios. Simulation results demonstrate the effectiveness and superiority of the proposed algorithms.
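
To make the bandit formulation concrete, here is a minimal sketch in which each arm is a beam index offset and a standard UCB1 rule picks the offset to try in each slot. This is not the algorithm proposed in the work; UCB1 is swapped in as a well-known stochastic bandit baseline. The function name `run_ucb1_beam_tracking`, the drift distribution `drift_probs`, the Gaussian-shaped gain model, and the assumption that the previous best beam is known at the start of each slot are all hypothetical simplifications chosen for illustration.

```python
import math
import random


def circular_distance(a, b, num_beams):
    """Distance between two beam indexes on a circular codebook."""
    d = abs(a - b) % num_beams
    return min(d, num_beams - d)


def run_ucb1_beam_tracking(num_beams=16,
                           offsets=(-2, -1, 0, 1, 2),
                           drift_probs=(0.05, 0.10, 0.15, 0.60, 0.10),
                           steps=5000, noise_std=0.05, seed=0):
    """UCB1 over beam index offsets (illustrative toy model, see lead-in).

    Assumptions (hypothetical): the best beam drifts by an i.i.d. offset
    each slot, and the previous best beam is known when the slot starts.
    """
    rng = random.Random(seed)
    counts = [0] * len(offsets)    # number of times each offset was tried
    means = [0.0] * len(offsets)   # running mean reward per offset
    best_beam = 0
    total_reward = 0.0

    for t in range(1, steps + 1):
        if t <= len(offsets):
            arm = t - 1            # initialization: try every offset once
        else:
            # UCB1 index: empirical mean plus exploration bonus
            arm = max(
                range(len(offsets)),
                key=lambda i: means[i]
                + math.sqrt(2.0 * math.log(t) / counts[i]),
            )

        # Environment: the best beam moves by a random offset.
        prev_best = best_beam
        drift = rng.choices(offsets, weights=drift_probs)[0]
        best_beam = (prev_best + drift) % num_beams

        # Agent: steer to the previous best beam plus the chosen offset.
        chosen_beam = (prev_best + offsets[arm]) % num_beams
        d = circular_distance(chosen_beam, best_beam, num_beams)

        # Normalized noisy gain in [0, 1]; decays with misalignment.
        reward = math.exp(-0.5 * d * d) + rng.gauss(0.0, noise_std)
        reward = min(1.0, max(0.0, reward))

        counts[arm] += 1
        means[arm] += (reward - means[arm]) / counts[arm]
        total_reward += reward

    return counts, means, total_reward / steps


if __name__ == "__main__":
    counts, means, avg = run_ucb1_beam_tracking()
    for off, n, m in zip((-2, -1, 0, 1, 2), counts, means):
        print(f"offset {off:+d}: tried {n} times, mean reward {m:.3f}")
    print(f"average reward per slot: {avg:.3f}")
```

In this toy setting the offset that matches the most likely drift accumulates the most plays, which mirrors the idea that the beam index difference reflects how fast the environment changes. A real system would replace the synthetic gain with measured beam quality and would need a way to handle the case where the previous best beam is not known exactly.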
